Last semester, I taught computational biology for the first time at Southeastern (schedule, course materials). This is a little bit of a different ‘flavor’ of computational biology than a lot of the courses we see, since I’m not really a genomics person, but an evolutionary biologist, working in a department of mostly population (ecology, evolution, behavior) biologists. The audience was upper division undergrads and MS students, and one faculty member.

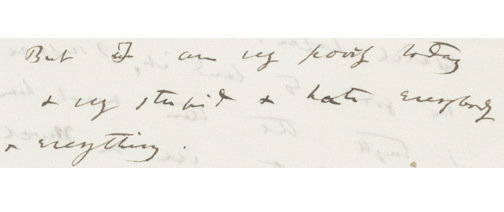

This semester, I tried something different from what I’ve done in the past: I decided to forgo installs at the start, and had students run everything in a JupyterHub. My blogpost on setting all that up is here. As I covered in that post, teaching undergraduates is different from teaching graduate students. With graduate students, “I need to install this so I can analyze my data and get my MS/PhD” is a powerful motivator. They’re captive. My course is an elective. If the students feel super shitty and incapable after a day of installs, they can leave. And when they do, this is how they’ll feel:

Undergrads require a reframing of how we teach computation. The goal might not be that they have a laptop full of software ready to go, but that they learn something about computation, feel confident in those skills, and get to interact with some MS students and research active peers. So I didn’t start with installs. We did them at the end, for students who wanted to keep working in Python on their personal computers or the state HPC. This was very smooth.

I used a combination of Jupyter Notebooks and the Hub’s command line to teach. I’ve documented a lot of my thoughts on this choice here. Fundamentally, to me, the argument for notebooks boils down to this: Our competitor isn’t C++ or MATLAB. It’s Excel. The retreat to the familiar. To get people working a little more reproducibly, and taking those first steps in computation, why take away all the nice interface bells and whistles they’re familiar with? Notebooks render well, they enable note taking, and data tables printed in a notebook look familiar.

Over the first month, we went through the Data Carpentry Python ecology materials. This by and large went great. I’m a maintainer on those materials, and using them in class led to new pull requests from me, and has informed my own thinking on some of the issues and pull requests raised during the Spanish translation of the lesson.

One piece of feedback I got was that the first part of the course moves very fast. I think next time, we’ll spend 6 weeks on the really basic Python stuff. I’ll also split the first assessment into two pieces: one on the basic slicing operations, and one on functions and scripts. I kind of thought 4 weeks would be enough time to cover material that’s supposed to be covered in a 6-hour workshop. Alas.
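To give a sense of what those two pieces cover, here is a minimal sketch in plain Python. The weights and the `mean_weight` function are made up for illustration; they are not taken from the course materials, which work with Pandas data frames rather than bare lists.

```python
# Hypothetical body-mass measurements in grams (illustrative values only).
weights = [40, 48, 36, 52, 44, 117]

# Piece 1: indexing, slicing, and filtering.
first = weights[0]                        # a single element by position
first_three = weights[:3]                 # a slice: positions 0, 1, and 2
last_two = weights[-2:]                   # negative indices count from the end
heavy = [w for w in weights if w > 45]    # keep only values above a threshold


# Piece 2: wrapping a calculation in a reusable function.
def mean_weight(values):
    """Return the arithmetic mean of a non-empty list of numbers."""
    if not values:
        raise ValueError("values must not be empty")
    return sum(values) / len(values)


if __name__ == "__main__":
    # Running the file as a script prints a small summary.
    print("First three:", first_three)
    print("Heavier than 45 g:", heavy)
    print("Mean of all weights:", mean_weight(weights))
```

The first half is the sort of thing the slicing assessment would target; the second half, saved to a file and run from the command line, is roughly the scale of a "functions and scripts" exercise.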

For the rest of the course, we do some querying of data from the web (Open Tree of Life, BLAST), phylogenetic computing with DendroPy, project management, and Git & GitHub. We also talk about Louisiana-specific stuff, like using the state supercomputer.

The final assessment was building Python packages and doing teach-ins. Everyone did really well within the parameters of the assignment. The idea was that they would implement a couple of functions in a package, document them, and then teach their lab (for the MS students) or the class (for the undergraduates) how to use the package. I doubted myself a little too much and let them re-use functions from earlier in class. For some reason, I thought I hadn’t shown them enough to do something totally novel. But it’s pretty clear from conversations after the fact that I could have aimed higher with this. Next time around, I’m going to structure my assessments like so:

1. Indexing, slicing, filtering data (Python in Pandas)

2. Functions and scripts

3. Querying the web, visualization

4. Making a Python package and putting it on GitHub

5. Teach-In

I was really worried about overwhelming them too early with assessment, but paradoxically made those concerns worse by holding off on the first assessment until too late.

Overall, I’m really happy with how the course went, and student evaluations suggest the learners were, too. For a first pass, I’m immensely satisfied. My second round with this course next fall will probably involve coming up with more biological narrative for the package making and GitHub steps.

Get Involved!

If you’re interested in any of this: I’m working on a SciPy proposal right now with Jeet Sukumaran. One of the things we would like to do is develop some Carpentries-style materials focused more towards phylogenetic data science – querying and cleaning data from the web, assembling phylogenetic datasets, processing MCMC output, visualization. We’d love collaborators!

I’m one of the hosts of iEvoBio this year, and the afternoon session will be on teaching computational biology. We’ll get started with lightning talks – 10 minutes on what you’re teaching, to whom, how you’re doing it, and what’s working about content delivery. Then, we’ll have a birds of a feather session where we try to write some of that info down to demystify course content delivery tech for instructors. We’ll put out a call for lightning talks soon. Feel free to get in touch early if you’re really keen!

I’ve also been having some conversations with the state supercomputing complex about making JupyterHubs available to host courses for free on those servers. If you might be interested (and are at an LA institution!), please get in touch.
